Evaluation of HDR to LDR mapping techniques for object detection under extreme lighting conditions

As stated earlier, retraining CNN-based object detectors for HDR images is a challenging task because:

- There are no large scale annotated HDR datasets to train detection models.

- Annotation tools for HDR images (needed for ground-truth creation) do not exist.

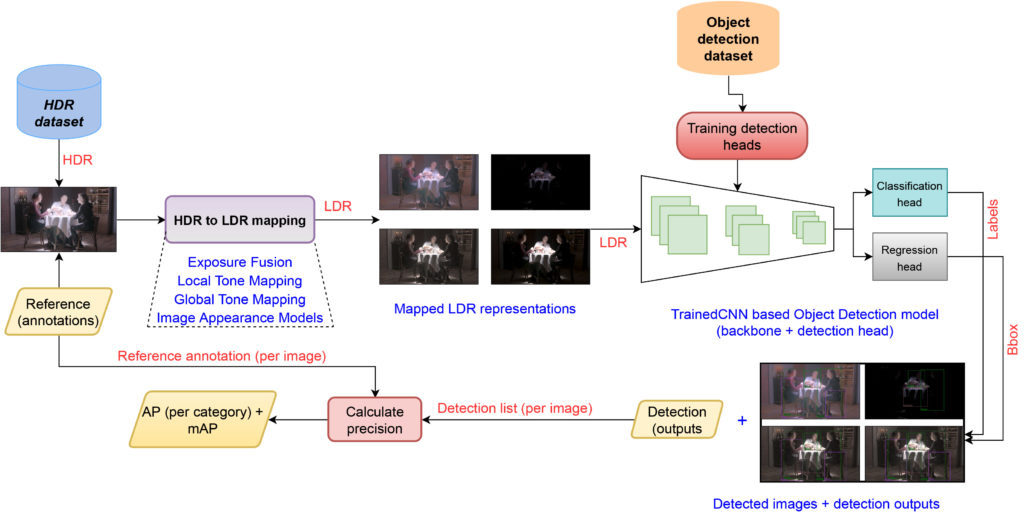

Given these constraints, the first part of the project was to find feasible ways to reuse detection models trained on existing LDR datasets, such as Pascal VOC and MS COCO, for HDR object detection. To use HDR data as input to LDR-trained models, we aimed to convert the HDR data to LDR format while retaining as much of the scene's tonal quality and dynamic range as possible.

To accomplish this, we decided to use existing HDR to LDR mapping techniques to convert the HDR data to LDR form, and then feed the mapped LDR data to a CNN-based detection algorithm for inference. However, more than 5000 HDR to LDR mapping techniques have been published or patented to date, so choosing among them is not straightforward.
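To illustrate what a single HDR to LDR mapping technique does, the sketch below implements the well-known global operator of Reinhard et al. (2002) in NumPy. It is only an example and not necessarily one of the 7 techniques evaluated in this work; the key value of 0.18, the Rec. 709 luminance weights and the display gamma of 2.2 are assumptions.

```python
import numpy as np


def reinhard_global(hdr_rgb: np.ndarray, key: float = 0.18, eps: float = 1e-6) -> np.ndarray:
    """Map a linear HDR RGB image (float, HxWx3) to an 8-bit LDR image.

    Illustrative sketch of Reinhard's global operator; parameters are assumed.
    """
    # Luminance from linear RGB using Rec. 709 weights (an assumption).
    lum = 0.2126 * hdr_rgb[..., 0] + 0.7152 * hdr_rgb[..., 1] + 0.0722 * hdr_rgb[..., 2]
    # Log-average luminance of the scene.
    log_avg = np.exp(np.mean(np.log(eps + lum)))
    # Scale so that the log-average luminance maps to the chosen key value.
    scaled = (key / log_avg) * lum
    # Compress with L / (1 + L), which maps [0, inf) into [0, 1).
    lum_d = scaled / (1.0 + scaled)
    # Apply the luminance ratio to every channel to keep colour ratios.
    ratio = lum_d / (lum + eps)
    ldr = np.clip(hdr_rgb * ratio[..., None], 0.0, 1.0)
    # Gamma-encode for display (assumed gamma 2.2) and quantise to 8 bits.
    return np.round(255.0 * ldr ** (1.0 / 2.2)).astype(np.uint8)
```

The output is an ordinary 8-bit image, so it can be passed unchanged to any detector trained on LDR data.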

To determine the feasibility of using mapped HDR data, the first task was to evaluate multiple HDR to LDR mapping techniques. For the purposes of this work, we selected 7 different HDR to LDR mapping techniques and evaluated the mapping quality across 3 different object detection algorithms.

To carry out this evaluation, we first created a mini-dataset of approximately 1300 HDR images, which were annotated by multiple annotators to create the ground truth. Each of the 1300 images was then processed by each of the 7 mapping techniques, and the resulting mapped LDR images were fed to each of the 3 detection models for inference.
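The structure of this evaluation (every HDR image through every mapping technique, every mapped image through every detector, giving 7 × 3 = 21 combinations) can be sketched as a simple grid loop. The function and type names below are hypothetical, and the mapping techniques and detectors are passed in as plain callables because their actual implementations are not described here.

```python
from typing import Callable, Dict, List, Sequence, Tuple

import numpy as np

# Hypothetical aliases: a tone-mapping operator turns an HDR array into an
# LDR array, and a detector turns an LDR array into a list of detections.
ToneMapper = Callable[[np.ndarray], np.ndarray]
Detector = Callable[[np.ndarray], list]


def run_grid(
    hdr_images: Sequence[np.ndarray],
    tmos: Dict[str, ToneMapper],
    detectors: Dict[str, Detector],
) -> Dict[Tuple[str, str], List[list]]:
    """Run every detector on every tone-mapped image.

    Returns a dict keyed by (tmo_name, detector_name) whose value holds the
    per-image detections, in the same order as hdr_images.
    """
    results: Dict[Tuple[str, str], List[list]] = {}
    for tmo_name, tmo in tmos.items():
        # Tone-map each HDR image once and reuse it for every detector.
        ldr_images = [tmo(img) for img in hdr_images]
        for det_name, detect in detectors.items():
            results[(tmo_name, det_name)] = [detect(ldr) for ldr in ldr_images]
    return results
```

Caching the tone-mapped images per operator avoids recomputing the mapping for each detector, which matters when the mapping step is the slow part of the pipeline.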

Finally, the inference results were evaluated against the ground truth in terms of average precision and recall. These results were used to assess how suitable each HDR to LDR mapping technique and detection model is for detecting objects under extreme lighting conditions with a backward-compatible approach.
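Average precision and recall can be computed per class in the style of the Pascal VOC protocol: detections are sorted by confidence, greedily matched to ground-truth boxes at an IoU threshold, and the resulting precision/recall curve is integrated. The sketch below uses an IoU threshold of 0.5 and all-point interpolation; the exact protocol used in this work is an assumption here.

```python
import numpy as np


def iou(a, b):
    """Intersection over union of two boxes given as (x1, y1, x2, y2)."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def average_precision(detections, ground_truth, iou_thr=0.5):
    """Single-class AP and final recall.

    detections:   list of (image_id, score, box)
    ground_truth: dict mapping image_id -> list of boxes
    """
    n_gt = sum(len(boxes) for boxes in ground_truth.values())
    matched = {k: [False] * len(v) for k, v in ground_truth.items()}
    tp, fp = [], []
    # Process detections from most to least confident.
    for img_id, _, box in sorted(detections, key=lambda d: -d[1]):
        best, best_j = 0.0, -1
        for j, gt_box in enumerate(ground_truth.get(img_id, [])):
            overlap = iou(box, gt_box)
            if overlap > best:
                best, best_j = overlap, j
        if best_j >= 0 and best >= iou_thr and not matched[img_id][best_j]:
            matched[img_id][best_j] = True
            tp.append(1)
            fp.append(0)
        else:
            # Too little overlap, or a duplicate of an already-matched object.
            tp.append(0)
            fp.append(1)
    if n_gt == 0 or not tp:
        return 0.0, 0.0
    tp_c, fp_c = np.cumsum(tp), np.cumsum(fp)
    recall = tp_c / n_gt
    precision = tp_c / (tp_c + fp_c)
    # All-point interpolation: make precision non-increasing, then integrate.
    r = np.concatenate(([0.0], recall, [1.0]))
    p = np.concatenate(([0.0], precision, [0.0]))
    for i in range(len(p) - 2, -1, -1):
        p[i] = max(p[i], p[i + 1])
    idx = np.where(r[1:] != r[:-1])[0]
    ap = float(np.sum((r[idx + 1] - r[idx]) * p[idx + 1]))
    return ap, float(recall[-1])
```

Running this once per class and per (mapping technique, detector) pair, and averaging AP over classes, gives one mAP figure per combination.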

For the detailed findings of this work, please refer to: https://ieeexplore.ieee.org/document/9144199

Evaluation of LDR to HDR mapping techniques for HDR object detection

The previous phase was a backward-compatible study of the feasibility of using existing HDR to LDR mapping techniques together with existing LDR-based object detection models. However, the backward-compatible approach is not ideal, for the following reasons:

- Converting HDR to LDR with tone mapping techniques is, at best, a lossy process. The lost information cannot be recovered or processed further.

- The end-to-end pipeline of HDR to LDR mapping followed by detection is too slow to be used for real-time applications.
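A toy example makes the lossiness concrete: after a compressive curve and 8-bit quantisation, clearly different scene radiances can end up with the same code value, and no inverse mapping can tell them apart afterwards. The curve L / (1 + L) used here is an illustrative choice, not one of the evaluated operators.

```python
import numpy as np


def tonemap_8bit(radiance: np.ndarray) -> np.ndarray:
    """Toy TMO: compress with L / (1 + L), then quantise to 8 bits."""
    return np.round(255.0 * radiance / (1.0 + radiance)).astype(np.uint8)


# Four clearly distinct scene radiances (arbitrary linear units).
radiance = np.array([0.5, 100.0, 1000.0, 2000.0])
codes = tonemap_8bit(radiance)
print(codes.tolist())  # [85, 252, 255, 255]: 1000 and 2000 collapse to one code
```

Highlights are hit hardest: the brighter the region, the more distinct radiance values share a single 8-bit code.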

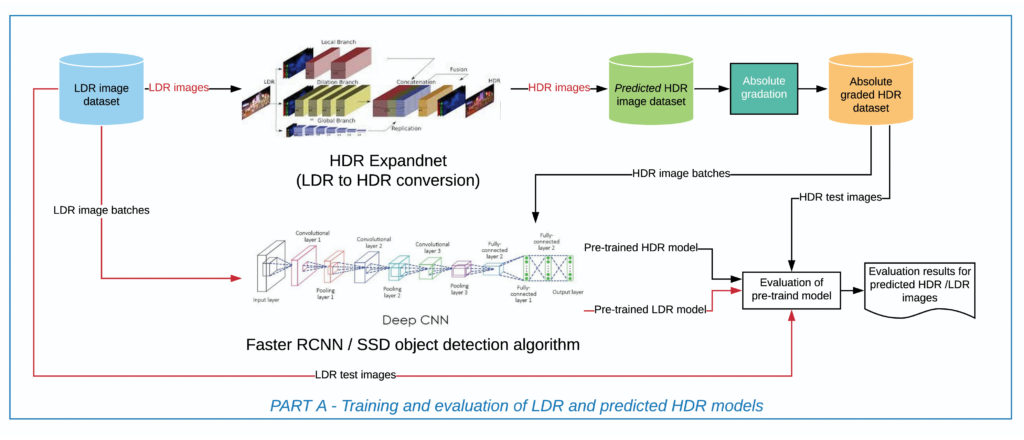

To mitigate these issues, the main goal of this work was to retrain object detectors using HDR data. However, as mentioned earlier, large-scale HDR data is not available for training purposes. We therefore decided to create pseudo-HDR data using existing inverse tone mapping techniques.

Existing LDR to HDR mapping techniques (also known as expansion operators) can be classified into two types:

- Heuristic-based operators, and

- CNN-based expansion operators.
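As an example of the first category, a minimal heuristic expansion operator can linearise the 8-bit values with an assumed display gamma and stretch them to an assumed peak luminance. The function name, the gamma of 2.2 and the 1000 cd/m² peak below are illustrative assumptions, not the operators evaluated in this work.

```python
import numpy as np


def gamma_expand(ldr_u8: np.ndarray, peak_nits: float = 1000.0, gamma: float = 2.2) -> np.ndarray:
    """Heuristic LDR -> HDR expansion (illustrative only).

    1. Normalise 8-bit values to [0, 1] using the image's own min/max.
    2. Undo an assumed display gamma to get approximately linear values.
    3. Stretch to an assumed peak luminance in cd/m^2.
    """
    x = ldr_u8.astype(np.float64)
    lo, hi = x.min(), x.max()
    # Guard against a constant image, which would otherwise divide by zero.
    norm = (x - lo) / (hi - lo) if hi > lo else np.zeros_like(x)
    return peak_nits * norm ** gamma
```

A global curve like this cannot restore detail lost to clipping: every saturated pixel is simply mapped to the peak value.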

The figure in Part A shows the pipeline used to evaluate existing expansion operators for LDR to HDR expansion.

For detailed evaluation results, see the presentation attached on the home page.

After extensive evaluation, we decided to use one of the state-of-the-art CNN-based expansion operators, as it provided the best LDR to HDR prediction. We then used this expansion operator to expand existing LDR object detection datasets, such as Pascal VOC and MS COCO, into pseudo-HDR datasets.

The pseudo-HDR datasets were then used to train multiple object detection models for HDR object detection.
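Because an expansion operator changes pixel values but not image geometry, the existing bounding-box annotations can be carried over unchanged. A minimal sketch of that conversion step follows, assuming `expand` is a hypothetical callable standing in for the chosen expansion operator, and that the output is kept in floating point so the extended range is not re-quantised to 8 bits.

```python
from typing import Callable, Iterable, Iterator, List, Tuple

import numpy as np

Box = Tuple[float, float, float, float, str]  # (x1, y1, x2, y2, class_name)


def to_pseudo_hdr(
    samples: Iterable[Tuple[np.ndarray, List[Box]]],
    expand: Callable[[np.ndarray], np.ndarray],
) -> Iterator[Tuple[np.ndarray, List[Box]]]:
    """Yield (pseudo-HDR image, boxes) pairs from (LDR image, boxes) pairs."""
    for ldr, boxes in samples:
        # Keep the expanded image as float32 to preserve its extended range.
        hdr = expand(ldr).astype(np.float32)
        # Boxes stay valid only if the expansion preserved the image size.
        if hdr.shape[:2] != ldr.shape[:2]:
            raise ValueError("expansion changed image size; boxes would need rescaling")
        yield hdr, list(boxes)
```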

Evaluation of HDR- and LDR-trained models on an out-of-distribution (OOD) dataset

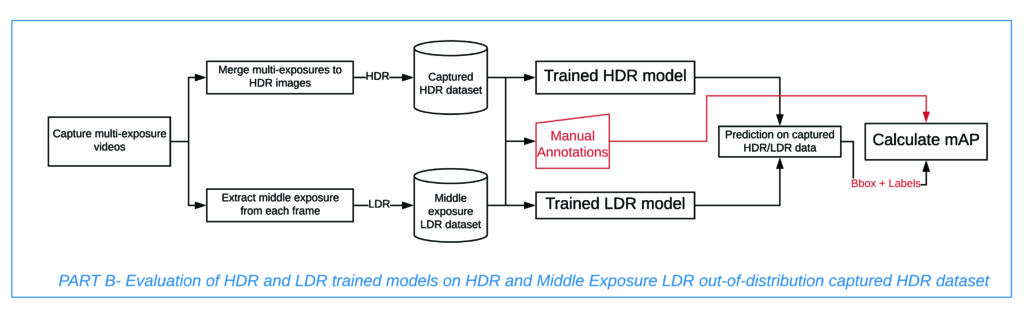

As stated above, the pseudo-HDR datasets were used to train object detection models. However, pseudo-HDR data differs significantly from real captured HDR data. We therefore created a mini-dataset of real HDR images to compare the pseudo-HDR-trained models against the LDR-trained models.

The pseudo-HDR-trained models were tested on the annotated real-HDR data, and the LDR-trained models were tested on the middle exposure of the same HDR data.
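If the real-HDR test images are available as exposure brackets, the LDR input for the baseline models can be selected as sketched below. How the "middle exposure" was defined in this work (for example, the median exposure time of a 3- or 5-frame bracket) is an assumption here, and `middle_exposure` is a hypothetical helper.

```python
from typing import List, Tuple

import numpy as np


def middle_exposure(bracket: List[Tuple[float, np.ndarray]]) -> np.ndarray:
    """Return the middle frame of an exposure bracket.

    bracket: list of (exposure_time_seconds, ldr_image) pairs in any order.
    For an even number of frames, the shorter of the two central exposures
    is returned.
    """
    if not bracket:
        raise ValueError("empty bracket")
    # Sort by exposure time only, so the image arrays are never compared.
    ordered = sorted(bracket, key=lambda item: item[0])
    return ordered[(len(ordered) - 1) // 2][1]
```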

The results show a significant improvement in detection performance under extreme lighting conditions when using the HDR-trained models.

Platform/Language information:

- For the initial evaluation of the feasibility of TMOs (tone mapping operators) and exposure fusion videos in object tracking, we used native MATLAB code on Microsoft Windows.

- The second and third parts of the evaluation were done using a mixture of MATLAB and Python code.

- The models used for evaluation were likewise written partly in MATLAB and partly in Python.

Code available: https://github.com/MASSIVE-VR-Laboratory/ImageryEvaluation